If you are interested in training robots with machine learning, a new Arduino project may be worth a look. It set out to test whether a basic robot could navigate using only LiDAR, rather than more resource-intensive computer vision techniques.

LiDAR, or Light Detection and Ranging, is a remote sensing method that uses light in the form of a pulsed laser to measure distances. The technology has been gaining traction in autonomous vehicles and robotics. This project, demonstrated by YouTuber Nikodem Bartnik with a LiDAR-equipped mobile robot, aimed to push its boundaries further.

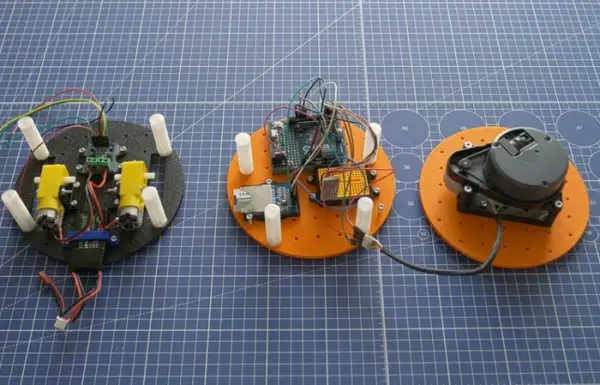

The robot’s hardware was constructed in accordance with the Open Robotic Platform (ORP) rules. This included two DC motors, an UNO R4 Minima, a Bluetooth module, and an SD card. The adherence to ORP rules ensured that the robot was built on a platform that promotes open-source hardware and software, fostering innovation and collaboration in the robotics community.

Robot machine learning

Bartnik’s approach to training the robot was meticulous and innovative. He collected a point cloud from the spinning LiDAR sensor by manually driving the robot through a series of courses. The data was then imported and transformed to reduce the size of the classification model. This process of data collection and transformation was crucial in training the robot to recognize and navigate obstacles.
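
The article does not say which transformations were used, so the following is only a minimal sketch of one common way to shrink LiDAR input before classification: collapsing a full scan into the nearest distance per angular sector. The function name `downsample_scan`, the sector count, the 4000 mm range limit, and the sample readings are all assumptions made for illustration, not details from the project.

```python
def downsample_scan(points, num_sectors=8, max_range_mm=4000.0):
    """Reduce a 360-degree scan to the nearest distance in each angular sector.

    points: iterable of (angle_degrees, distance_mm) pairs (hypothetical format).
    Sectors with no valid reading are filled with max_range_mm.
    Returns a list of num_sectors values scaled to the range [0, 1].
    """
    sector_width = 360.0 / num_sectors
    nearest = [max_range_mm] * num_sectors
    for angle_deg, distance_mm in points:
        if distance_mm <= 0 or distance_mm > max_range_mm:
            continue  # skip invalid or out-of-range readings
        sector = int((angle_deg % 360.0) // sector_width)
        sector = min(sector, num_sectors - 1)  # guard against float rounding at 360
        nearest[sector] = min(nearest[sector], distance_mm)
    # Scale so every feature shares the same range
    return [d / max_range_mm for d in nearest]


# Made-up readings: two in the first quadrant, one in the second, one invalid
scan = [(0.0, 1000.0), (10.0, 500.0), (100.0, 2000.0), (350.0, 0.0)]
print(downsample_scan(scan, num_sectors=4))  # [0.125, 0.5, 1.0, 1.0]
```

Fewer input features generally means a smaller model, which matters on a microcontroller with limited memory. The trade-off is lost angular detail, so the sector count would need tuning on real data.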

Training robots using machine learning

The foundational concept involves integrating machine learning algorithms into robotic systems to enable them to adapt to varying educational needs and contexts. These robots can be designed to perform tasks such as tutoring in specific subjects, assisting with hands-on experiments, or facilitating group activities. Importantly, the adaptive nature of machine learning allows these robots to learn from student interactions, thereby continuously improving their educational efficacy.

The training process usually comprises data collection, model selection, and iterative refinement. Initially, the robot is trained using a dataset that may include student responses, facial expressions, or other interaction metrics. Algorithms such as decision trees, neural networks, or reinforcement learning can be used to build the model. The trained model is then deployed into the robot, which starts interacting with students. Feedback loops are essential here: as the robot engages with students, new data is collected and used to update the model, enabling a cycle of continuous improvement.

The workflow typically includes the following stages: data collection, data preprocessing, model selection, training, evaluation, deployment, and iterative refinement.

- Data Collection: The first step is gathering data that the machine learning algorithm can learn from. This data can come from various sources such as sensors on the robot, human-robot interactions, or even simulated environments. The type of data collected depends on the task the robot is designed to perform. For instance, if the robot is being trained for object recognition, you might collect images of the objects from various angles and lighting conditions.

- Data Preprocessing: Raw data usually needs to be cleaned and transformed to be useful. This might involve normalizing sensor readings, annotating images, or segmenting time-series data. The goal is to prepare the data in a way that makes it easier for the machine learning algorithm to identify patterns.

- Model Selection: The next step is choosing the appropriate machine learning algorithm. The choice depends on the nature of the problem, the type of data, and the computational resources available. Algorithms can range from simpler methods like linear regression and decision trees to complex ones like neural networks or reinforcement learning algorithms.

- Training: Once the model is selected, the actual training process begins. The algorithm uses the processed data to adjust its internal parameters. For supervised learning tasks, the model learns to map inputs (features) to outputs (labels) based on the training data. In unsupervised tasks, the model tries to learn the underlying structure of the data. In the case of reinforcement learning, the robot learns by interacting with its environment and receiving rewards or penalties.

- Evaluation: After training, the model’s performance is evaluated using a separate dataset that it hasn’t seen before, known as the validation or test set. Metrics such as accuracy, precision, and recall are commonly used to quantify the model’s effectiveness.

- Deployment: Once the model is trained and evaluated, it’s integrated into the robot’s control system. This enables the robot to make decisions, perform tasks, or interact with humans or other systems based on the learned patterns.

- Iterative Refinement: As the robot operates in the real world, additional data can be collected to further refine and update the machine learning model, allowing the robot to adapt to new conditions or tasks over time.
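
The training and evaluation stages above can be sketched in a few lines of plain Python. This is an illustrative example only, not the method used in the project: it swaps in a nearest-centroid classifier because it is easy to read, and the feature vectors, the `left`/`right` labels, and all function names are hypothetical.

```python
import random


def split(samples, test_fraction=0.25, seed=0):
    """Shuffle and split samples into a training set and a held-out test set."""
    rng = random.Random(seed)
    shuffled = samples[:]
    rng.shuffle(shuffled)
    cut = int(len(shuffled) * (1 - test_fraction))
    return shuffled[:cut], shuffled[cut:]


def train_centroids(train):
    """Training: average the feature vectors of each label."""
    sums, counts = {}, {}
    for features, label in train:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: dist(centroids[label]))


def evaluate(centroids, test, positive):
    """Accuracy over all labels, plus precision and recall for one label."""
    tp = fp = fn = correct = 0
    for features, label in test:
        guess = predict(centroids, features)
        correct += guess == label
        tp += guess == positive and label == positive
        fp += guess == positive and label != positive
        fn += guess != positive and label == positive
    return {
        "accuracy": correct / len(test),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


# Made-up samples: (two normalized distance features, steering label)
data = [
    ([0.1, 0.9], "left"), ([0.2, 0.8], "left"),
    ([0.15, 0.7], "left"), ([0.3, 0.9], "left"),
    ([0.9, 0.1], "right"), ([0.8, 0.2], "right"),
    ([0.7, 0.15], "right"), ([0.9, 0.3], "right"),
]
train_set, test_set = split(data)
model = train_centroids(train_set)
print(evaluate(model, test_set, positive="left"))
```

Holding back part of the data in `split` is what makes the evaluation meaningful: the metrics are computed only on samples the model did not see during training. Iterative refinement would amount to appending newly collected samples to `data` and repeating these steps.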

Source: Training an Arduino UNO R4 powered robot using machine learning