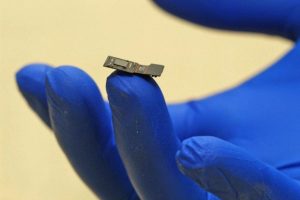

HOUSTON – (July 12, 2017) – Rice University engineers are building a flat microscope, called FlatScope™, and developing software that can decode and trigger neurons on the surface of the brain.

As part of a new government initiative, their goal is to provide an alternative pathway for delivering sight and sound directly to the brain.

The project is part of a $65 million effort announced this week by the federal Defense Advanced Research Projects Agency (DARPA) to develop a high-resolution neural interface. Among many long-term goals, the Neural Engineering System Design (NESD) program hopes to compensate for a person’s loss of vision or hearing by delivering digital information directly to parts of the brain that can process it.

Members of Rice’s Electrical and Computer Engineering Department will focus first on vision. They will receive $4 million over four years to develop an optical hardware and software interface. The optical interface will detect signals from modified neurons that generate light when they are active. The project is a collaboration with the Yale University-affiliated John B. Pierce Laboratory led by neuroscientist Vincent Pieribone.

Current probes that monitor and deliver signals to neurons — for instance, to treat Parkinson’s disease or epilepsy — are extremely limited, according to the Rice team. “State-of-the-art systems have only 16 electrodes, and that creates a real practical limit on how well we can capture and represent information from the brain,” Rice engineer Jacob Robinson said.

Robinson and Rice colleagues Richard Baraniuk, Ashok Veeraraghavan and Caleb Kemere are charged with developing a thin interface that can monitor and stimulate hundreds of thousands, and perhaps millions, of neurons in the cortex, the outermost layer of the brain.

“The inspiration comes from advances in semiconductor manufacturing,” Robinson said. “We’re able to create extremely dense processors with billions of elements on a chip for the phone in your pocket. So why not apply these advances to neural interfaces?”
