As the value of edge computing becomes more obvious, chip manufacturers are racing to be the one whose SoC, FPGA, or MPU gives designers everything they need to build powerful edge AI solutions.

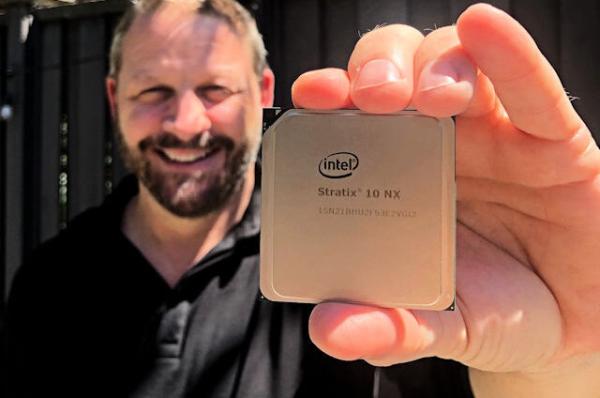

While a lot of progress has been made with MPUs and SoCs through impressive boards like Google’s Coral and the Intel Compute Stick, very few FPGA solutions offer the kind of specifications that edge AI requires. Taking a stab at this, Intel recently announced a new AI-optimized FPGA called the Stratix 10 NX.

The Stratix 10 NX is designed for high-bandwidth, low-latency AI acceleration. It accelerates AI compute through AI-optimized compute blocks that offer up to 15X more throughput than the standard Intel Stratix 10 FPGA DSP Block. It also provides in-package, 3D-stacked high-bandwidth memory (HBM) DRAM and PAM4 transceivers running at up to 57.8 Gbps.

The Stratix 10 NX combines these capabilities to give designers what they need to develop customized hardware with integrated high-performance AI. At the center is a new type of AI-optimized block called the AI Tensor Block. It is tuned for the matrix-matrix and vector-matrix multiplications common in AI computations, and it is designed to work efficiently with both small and large matrix sizes. According to Intel’s spec sheet, a single AI Tensor Block achieves up to 15X more INT8 throughput than the standard Intel Stratix 10 FPGA DSP Block.
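To make the INT8 vector-matrix multiplication mentioned above more concrete, here is a minimal Python sketch of the arithmetic such a block is built to accelerate. It is only an illustration of the math, not a model of how the AI Tensor Block works internally. The function names, the per-tensor `scale`, and the sample values are all assumptions made for the example.

```python
def quantize_int8(values, scale):
    """Map real values to signed 8-bit integers.

    `scale` is a hypothetical per-tensor scale chosen for this example.
    Results are clamped to the INT8 range [-128, 127].
    """
    return [max(-128, min(127, round(v / scale))) for v in values]


def int8_vector_matrix(vector, matrix):
    """Multiply a length-K INT8 vector by a K x N INT8 matrix.

    Products are summed into ordinary Python integers, which stand in
    for the wider accumulator that integer hardware typically uses.
    """
    rows, cols = len(matrix), len(matrix[0])
    if len(vector) != rows:
        raise ValueError("vector length must match the number of matrix rows")
    out = [0] * cols
    for k in range(rows):
        for n in range(cols):
            out[n] += vector[k] * matrix[k][n]
    return out


# Sample activations, quantized with an assumed scale of 0.25.
activations = quantize_int8([0.5, -1.0, 0.25], scale=0.25)

# Sample 3 x 2 weight matrix, already expressed as INT8 values.
weights = [
    [1, -2],
    [3, 4],
    [-5, 6],
]

result = int8_vector_matrix(activations, weights)
print(activations, result)
```

Each output element is a dot product of small integers, which is why narrow number formats like INT8 allow hardware to pack many more multiply-accumulate operations into the same area than wider formats do.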

Read more: MEET THE STRATIX 10 NX FPGA: THE FIRST AI-OPTIMIZED FPGA FROM INTEL